Evidence of Impact: What Actually Changed When a School Redesigned Learning

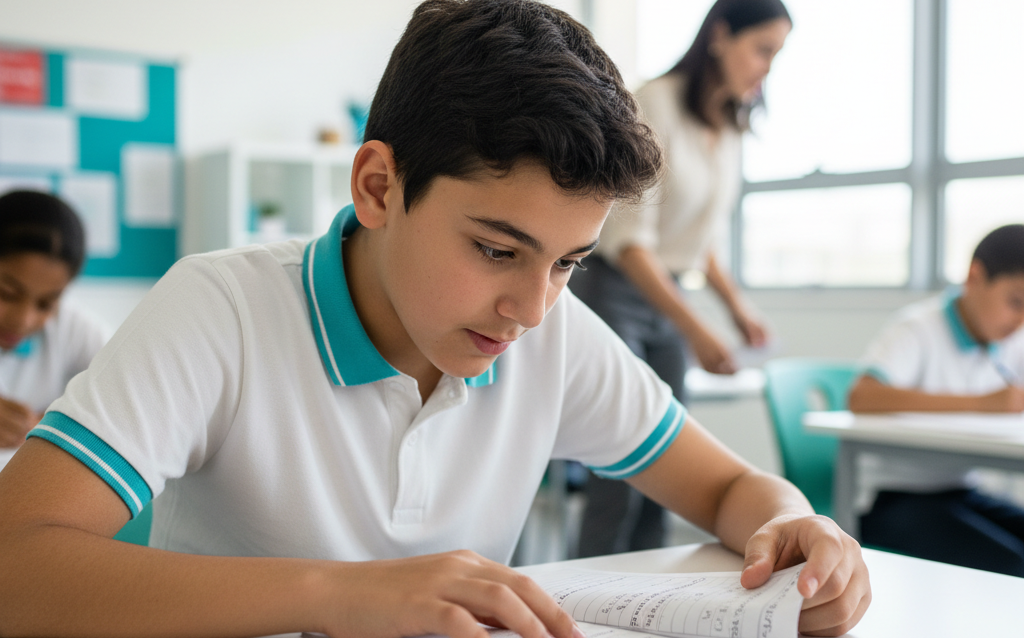

Three weeks into the term, something unexpected happened at Flexi Academy.

Students started asking to see their graded work again.

Not to negotiate for extra marks.

Not because a teacher required corrections.

But because they genuinely wanted to understand what they had misunderstood — and how to improve it.

For many teachers, that was the first signal that something real was shifting.

This wasn’t a new reward system.

It wasn’t a motivational campaign.

It wasn’t smaller classes or extra tutoring.

It was a structural change in how learning was organised.

Here’s what happened.

A Full-School Shift — Not a Pilot Bubble

The 6Ds instructional framework was implemented across the entire school for one academic term:

– 450 students

– 30 teachers

– Grades 1 through 12

– English, Mathematics, Science, Humanities

No reduced class sizes.

No additional staffing.

No timetable redesign.

Teachers were trained to clarify learning intent earlier, embed short diagnostic checkpoints during lessons, and build structured reflection before and after assessment.

In other words, learning became a cycle — not a sequence.

Then we measured what changed.

Engagement Increased by 40% — But the Numbers Came After the Signals

Survey data showed a 40% increase in student engagement, measured across curiosity, persistence, collaboration, and self-reflection.

But teachers noticed it before the surveys confirmed it.

– Students stayed with harder questions longer.

– Participation spread beyond the usual confident few.

– Fewer reminders were needed to stay on task.

One teacher summed it up:

“They didn’t wait for me to tell them what to do next.”

At the start of the term, classroom dynamics were typical — a small group driving discussion while others followed.

By the end, more students were contributing ideas, questioning assumptions, and revisiting earlier work without prompting.

The difference wasn’t louder classrooms.

It was more intentional ones.

When students understand what they’re aiming for — and get regular chances to check whether they’re getting there — engagement stops being compliance. It becomes ownership.

Academic Performance Rose by 18% in One Term

Before the framework was introduced, the average academic score across the cohort was 68.2.

After one term, it rose to 84.1.

That’s an 18% improvement — statistically significant, with a large effect size.
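The basis for the percentage is not spelled out above, so it helps to look at both common ways of expressing a change between two averages: the difference in points and the change relative to the starting value. The following is a minimal sketch using only the two averages reported here; the function name `describe_change` is illustrative and not part of the school's analysis.

```python
def describe_change(before: float, after: float) -> tuple[float, float]:
    """Return (absolute change in points, relative change in percent)."""
    absolute = after - before            # difference in score points
    relative = absolute / before * 100   # change as a share of the starting average
    return absolute, relative


# The two cohort averages reported in the article.
points, percent = describe_change(68.2, 84.1)
print(f"Absolute change: {points:.1f} points")
print(f"Relative change: {percent:.1f}% of the starting average")
```

Whichever basis a headline percentage uses, stating it alongside the raw averages lets readers reproduce the figure themselves.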

Now, numbers like that deserve caution. Gains of this size are uncommon in education, particularly across an entire school. Assessments were aligned to existing internal benchmarks to maintain consistency, and statistical thresholds were deliberately strict (p < .01).
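For readers unfamiliar with the term, an effect size expresses the gain in units of score spread rather than raw points. The sketch below uses one common definition, Cohen's d with a pooled standard deviation, where values of roughly 0.8 or more are conventionally called large. The score lists are invented purely for illustration and are not the school's data, and the article does not say which effect-size measure was actually used.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(before: list[float], after: list[float]) -> float:
    """Cohen's d using the pooled sample standard deviation of both groups."""
    n1, n2 = len(before), len(after)
    pooled_var = (
        (n1 - 1) * stdev(before) ** 2 + (n2 - 1) * stdev(after) ** 2
    ) / (n1 + n2 - 2)
    return (mean(after) - mean(before)) / sqrt(pooled_var)


# Hypothetical scores for six students (illustrative only, not real data).
before_scores = [62.0, 70.0, 65.0, 74.0, 68.0, 71.0]
after_scores = [80.0, 86.0, 79.0, 90.0, 84.0, 85.0]

d = cohens_d(before_scores, after_scores)
print(f"Cohen's d: {d:.2f}")
print("Large effect by the usual convention" if d >= 0.8 else "Below the 'large' threshold")
```

Because the same students are measured before and after, a paired analysis may be more appropriate in practice; the pooled version is shown only because it is the simplest to read.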

Even so, the magnitude surprised us.

In many schools, improvements of this scale are associated with small-group interventions or multi-year reforms.

Here, it occurred across all year levels within one term.

What changed wasn’t content.

It was timing.

Assessment stopped being the end of learning and became part of learning. Students were expected to identify gaps before moving forward. Teachers adjusted instruction based on evidence in real time, not after the unit was over.

Feedback arrived while it could still change something.

That difference matters.

Teacher Satisfaction Increased by 35% — Even Though Teaching Got Harder First

Teacher satisfaction rose by 35% over the term.

But the early weeks weren’t easier.

Several teachers reported that lessons required more deliberate planning and more in-class decision-making. The shift toward embedded diagnostics meant paying closer attention to learning signals.

So why did satisfaction rise?

Because guessing decreased.

Teachers described having clearer visibility into:

• what students truly understood,

• where misconceptions were forming,

• and when to slow down or accelerate.

One teacher wrote:

“I stopped guessing where the class was. I could see it.”

That clarity changed the emotional equation of teaching. Effort felt more connected to impact.

And when effort translates visibly into progress, professional energy rises.

The Compounding Effect

What stood out most wasn’t any single metric. It was how they reinforced each other.

As engagement increased, students reflected more honestly on mistakes.

As reflection improved, academic performance strengthened.

As results improved, confidence grew.

And confidence fed back into engagement.

It became a loop:

Engagement → Reflection → Improvement → Confidence → Engagement

Parents noticed it too. Students began explaining why they chose certain strategies instead of simply reporting answers.

That shift — from describing answers to describing thinking — signals something deeper than short-term test gains.

It signals learning that sticks.

It Wasn’t Frictionless

No structural change is.

Two adjustments were needed early on:

1. Reflection initially took longer than expected. Teachers shifted to shorter, more frequent check-ins instead of extended sessions.

2. Some assessment rubrics needed refinement to align more clearly with diagnostic phases.

Neither required abandoning the framework. They required calibration.

That’s normal in real schools.

What This Case Actually Shows

Across one academic term in a fully functioning school serving Grades 1 through 12:

• Engagement increased by 40%

• Academic performance improved by 18%

• Teacher satisfaction rose by 35%

Those are measurable outcomes.

But the deeper finding is structural:

When diagnosis and reflection are embedded — not added on — improvement compounds.

This wasn’t about working harder.

It was about reorganising how learning responds to evidence.

And when learning responds faster, outcomes shift faster.

A Grounded Takeaway

This is one school. One term. One implementation.

It is not a universal claim.

But it does demonstrate something important:

When the learning cycle itself changes, behaviour changes.

When behaviour changes, results follow.

And when results reinforce the cycle, progress accelerates.

The lesson isn’t that transformation requires more resources.

It’s that structure determines what resources can actually achieve.